Researcher: Fan Zhang

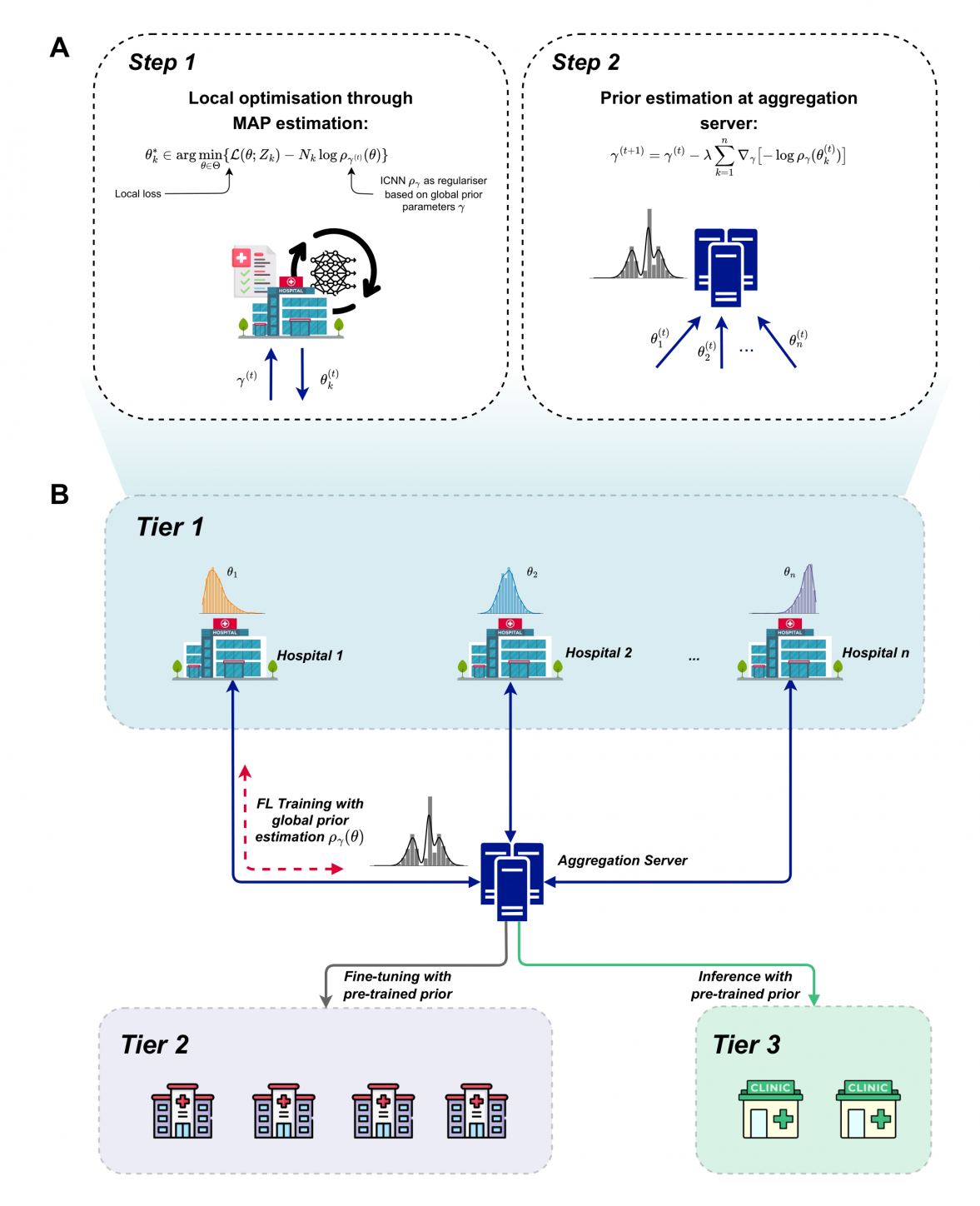

Recent healthcare research increasingly uses machine learning (ML) to improve diagnostic accuracy and treatment outcomes. However, developing effective ML models is hindered by data silos within healthcare institutions and by tightening privacy and security regulations, both of which limit collaborative model training across hospitals. Federated Learning (FL), a new ML paradigm, offers a solution: models are trained on data from multiple hospitals without transferring raw data, which preserves privacy and security. Despite this promise, the practical implementation of FL in healthcare faces significant challenges. These arise from the heterogeneous infrastructure of healthcare systems, the complexity of data that are not independent and identically distributed (non-IID), and existing model aggregation methods, which suffer from convergence issues on non-IID datasets. The lack of robust support for multi-task training within the FL framework compounds these difficulties. This project aims to overcome these obstacles, advancing FL for real-world healthcare applications, setting new benchmarks for ML in healthcare, and encouraging broader adoption of FL in domains that handle sensitive data.